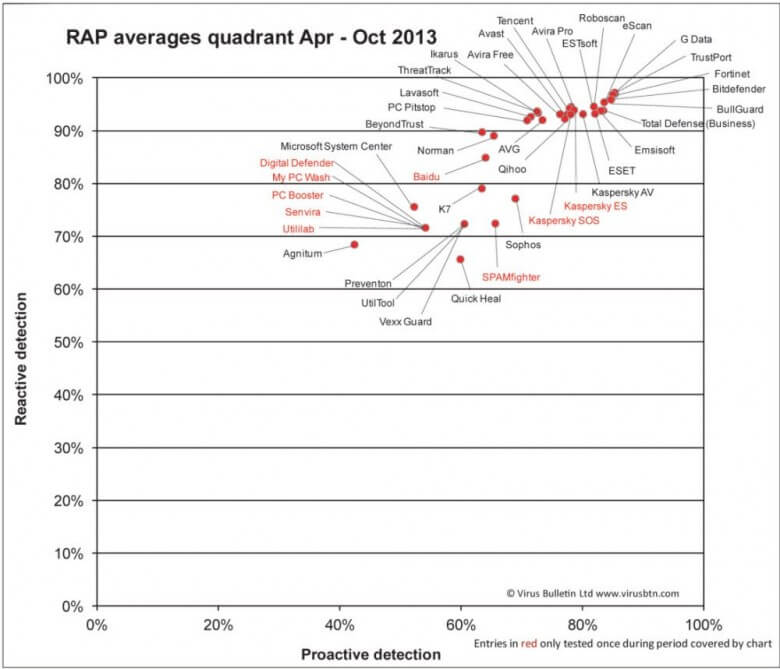

Virus Bulletin’s latest comparison chart, covering the period from April to October 2013, is based on an interesting system that tests both reactive and proactive protection across a number of leading antivirus products, albeit with several notable omissions.

Reactive Protection: describes the traditional antivirus method of combating malware via signatures stored in a definition database. This system generally requires prior knowledge of an existing threat before new signatures can be written and added to the database – a new threat is identified and the antivirus then ‘reacts’ by adding it to its protection base.

Proactive Protection: describes how well antivirus products deal with new, as yet unidentified malware, or zero-day threats. This is generally achieved via heuristic engines, which flag a file based on suspicious patterns of behavior rather than a known signature. A proactive system attempts to protect against malware before it’s been positively identified.
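To make the distinction concrete, here is a minimal Python sketch of the two approaches. It is a toy illustration only, not how any product in the chart actually works: the hash set, the behavior names, the weights, and the threshold are all made-up assumptions.

```python
import hashlib

# Hypothetical signature database: SHA-256 hashes of samples already identified.
KNOWN_BAD_HASHES = {hashlib.sha256(b"known-malware-sample").hexdigest()}

# Hypothetical behavior weights for a toy heuristic engine (assumed values).
SUSPICIOUS_BEHAVIORS = {
    "modifies_startup_entries": 3,
    "disables_security_tools": 4,
    "encrypts_user_files": 5,
    "opens_network_socket": 1,
}
HEURISTIC_THRESHOLD = 5  # assumed cut-off, chosen only for this example


def reactive_detect(sample: bytes) -> bool:
    """Signature check: only catches samples already in the database."""
    return hashlib.sha256(sample).hexdigest() in KNOWN_BAD_HASHES


def proactive_detect(observed_behaviors: set) -> bool:
    """Heuristic check: scores observed behavior, no prior signature needed."""
    score = sum(SUSPICIOUS_BEHAVIORS.get(b, 0) for b in observed_behaviors)
    return score >= HEURISTIC_THRESHOLD


# A brand-new variant slips past the signature check...
print(reactive_detect(b"brand-new-variant"))
# ...but can still be flagged if it behaves suspiciously enough.
print(proactive_detect({"modifies_startup_entries", "disables_security_tools"}))
```

The signature check is exact but blind to anything new, while the heuristic check can catch unknown samples at the cost of possible false positives if the threshold is set too low.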

The test measures products’ detection rates over the freshest samples available at the time the products are submitted to the test, as well as samples not seen until after product databases are frozen, thus reflecting both the vendors’ ability to handle the huge quantity of newly emerging malware and their accuracy in detecting previously unknown malware.
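As a rough sketch of how such scores might be computed, the snippet below turns detection counts into percentages for samples seen before the database freeze (reactive) and after it (proactive). The function names, the number of sample sets, and the simple averaging are my assumptions for illustration; Virus Bulletin’s methodology page is the authority on how the real figures are derived.

```python
def detection_rate(detected: int, total: int) -> float:
    """Percentage of samples in a set that the product detected."""
    if total <= 0:
        raise ValueError("sample set must not be empty")
    return 100.0 * detected / total


def summarize(reactive_sets, proactive_set):
    """Return (average reactive rate, proactive rate) as percentages.

    reactive_sets: list of (detected, total) pairs for sample sets
                   collected before the product database was frozen.
    proactive_set: one (detected, total) pair for samples first seen
                   after the freeze.
    """
    reactive_rates = [detection_rate(d, t) for d, t in reactive_sets]
    reactive_avg = sum(reactive_rates) / len(reactive_rates)
    proactive = detection_rate(*proactive_set)
    return reactive_avg, proactive


# Made-up counts for a hypothetical product, not figures from the chart.
reactive, proactive = summarize([(90, 100), (80, 100)], (70, 100))
print(f"reactive: {reactive:.1f}%  proactive: {proactive:.1f}%")
```

A product whose proactive figure sits close to its reactive figure is coping nearly as well with unseen samples as with known ones.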

*Products that entered only one of the comparatives used to generate the chart (or for which only one set of results is counted due to false positives in other tests) are marked in RED. This indicates that the score may be considered a less reliable indicator of detection capability than the scores of products for which an average across several tests is available.

Unsurprisingly, top scores are dominated by commercial antivirus products, although a couple of the free solutions are not far behind. Notable omissions are Norton, Trend Micro, and McAfee. Apparently these three security giants chose not to submit their products for testing.

Another disappointment, to me anyway, is that the Avast entry is not identified as either Free or Pro. In the absence of any indication to the contrary, my best guess would be that it refers to the Pro version. Anyway, I thought it was interesting to see how close the results for proactive protection were to those for reactive protection among the top products. It’s a lot closer than I would have expected and definitely a step in the right direction.

You can view a full rundown of how the report was compiled, methodology, etc. on the Virus Bulletin page here: http://www.virusbtn.com/vb100/rap-index.xml

Maybe I’m overlooking it, but I don’t see Panda Cloud AV among the group.

What about ZoneAlarm? They make a free antivirus/firewall.

Hi, I’ve been using ‘paid for’ BullGuard for three years or more now on my home PC. I’m pleased to see they’re in the top bunch. This confirms my confidence in the expansive software and their techs who have supported me on occasion.

Still confident using one of the top free AVs, such as Qihoo Internet Security, on my PC.